Clustering algorithm that models each cluster as a Gaussian

- The probability density function for observing a data point in our feature space

- is the number of Gaussians/classes/clusters

- The conditional distribution

- is the Multivariate Gaussian Distribution

- is the position of point

Parameter Estimation

- Indicator function for whether data point belongs to cluster .

- Kind of like the Kronecker Delta in this case

- Non-binary “soft” version, used when actually training the Gaussian Mixture Model

- Expanded by Bayes’ Theorem

- The weight associated with a particular Gaussian

- The probability of a particular class

- Proportion of points with to a particular

- The mean position of all points in cluster

- The covariance matrix for all points with

Training

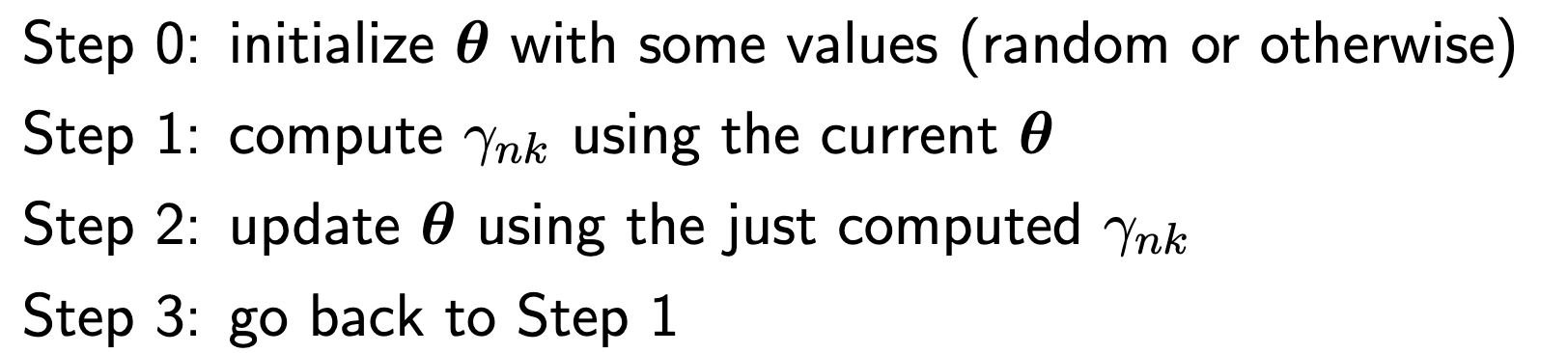

Called expectation-maximization

- Very similar to K-Means, except that it is considered a “soft” version and also with a non zero

- The E step corresponds to step 1 while the M step corresponds to step 2